cddt:

Is it scary? The tool is performing exactly as expected. Large language models are not designed to provide accurate answers to questions of fact.

Excuse me?

It's true to an extent. It's the wrapper Perplexity uses as its UI and UX that sets a wrong expectation with people who don't know how LLMs work. It gets easier to get accurate output from them when you understand the limitations and strengths, because then your expectation of what you need from them changes too. Tying GenAI to Search was a huge mistake, imo, but since big tech only knows how to make money through advertising, they had to go where the users are and shoehorn it into Search products.

If you want to use AI, go for the ones that aren't trying to be all things to all people. That's why I have 4 or 5 separate services that I use for different purposes. I wouldn't go to YouTube to do my internet banking, for example.

The point I keep trying to make is not that these things get stuff wrong, but that they present it in a manner that almost amounts to gaslighting. If you don't already know what is correct, you can very easily be led down a rabbit hole. I think this is irresponsible and potentially dangerous. I don't see why 'information' that is not historically verified cannot be presented with clarifying qualifications. My session with Perplexity actually became something resembling a confrontation. It simply could not accept that its completely wrong conclusion might be incorrect.

Plesse igmore amd axxept applogies in adbance fir anu typos

My response doesn't invalidate your point. Though I do feel you are conflating what Perplexity, as a suite of integrated services, is doing with what the LLM itself is doing.

gehenna:

It's true to an extent. It's the wrapper Perplexity uses as its UI and UX that sets a wrong expectation with people who don't know how LLMs work. It gets easier to get accurate output from them when you understand the limitations and strengths, because then your expectation of what you need from them changes too. Tying GenAI to Search was a huge mistake, imo, but since big tech only knows how to make money through advertising, they had to go where the users are and shoehorn it into Search products.

If you want to use AI, go for the ones that aren't trying to be all things to all people. That's why I have 4 or 5 separate services that I use for different purposes. I wouldn't go to YouTube to do my internet banking, for example.

Would you be willing to share which services you use for which purpose?

Rikkitic:

The point I keep trying to make is not that these things get stuff wrong, but that they present it in a manner that almost amounts to gaslighting. If you don't already know what is correct, you can very easily be led down a rabbit hole. I think this is irresponsible and potentially dangerous. I don't see why 'information' that is not historically verified cannot be presented with clarifying qualifications. My session with Perplexity actually became something resembling a confrontation. It simply could not accept that its completely wrong conclusion might be incorrect.

Yes, I am inclined to agree. It's fine to be wrong. But insisting you are correct when you aren't, and not citing sources or noting that your information is only current to a particular date, is inexcusable.

The average person using AI (especially if paying) is not going to understand the technology to the level some of us may, so safeguards need to exist, and one way to handle this is the above.

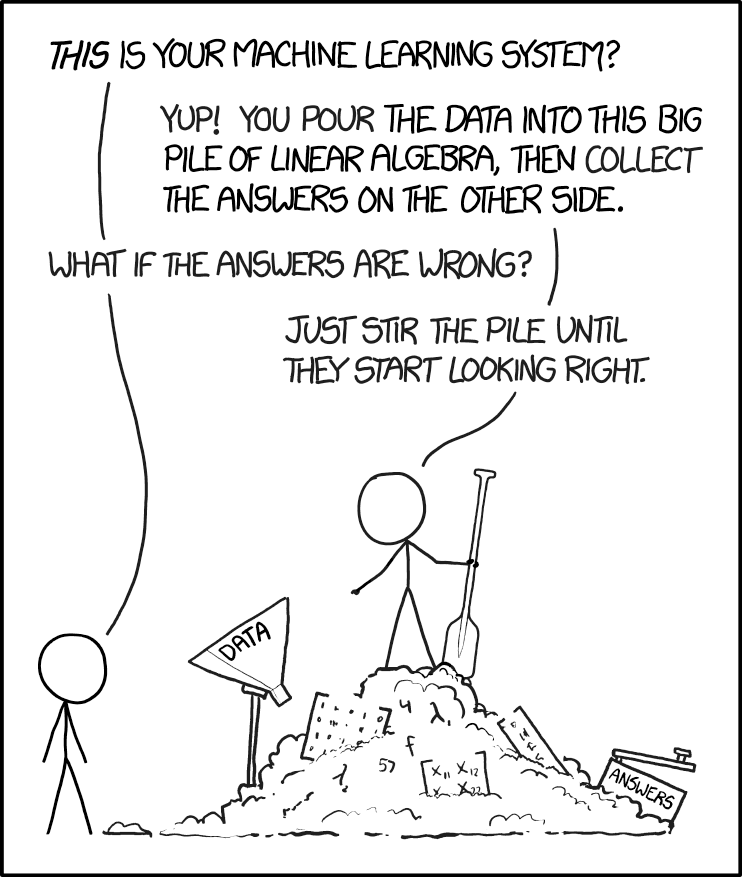

They're all just a giant f'ing matrix. That's all.
