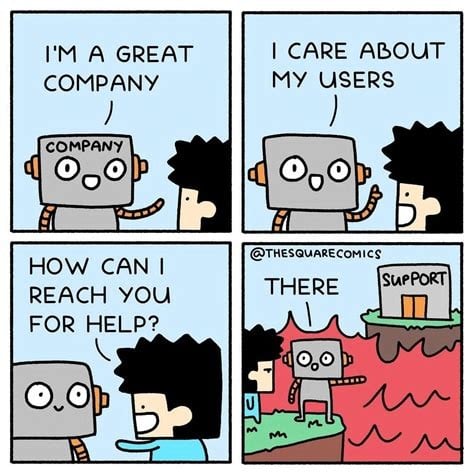

kingdragonfly: "Don't Call It Slop!" - The Corporate AI Dumpster Fire

...

If they prefer another four-letter word starting with S then I'm happy to oblige.

New Zealanders, on average, have one testicle.

The other day I saw something to the effect of "they call themselves 'prompt engineers' but they're really sloperators".

Behodar:

The other day I saw something to the effect of "they call themselves 'prompt engineers' but they're really sloperators".

Tinkerisk:

So, confirming that I've eventually caught up, either the second comment is sloppy, or it arises out of an awful lot of spying.

kingdragonfly: "Don't Call It Slop!" - The Corporate AI Dumpster Fire

I engage occasionally with duck.ai. I asked it to unpack some acronym. It produced a plausible answer. I asked for a citation. It produced links to the tops of websites. I asked for a specific citation. It produced irrelevant links.

Eventually, we agreed that it had probably constructed the answer from training data. I asked for a copy of the relevant training data. It can't see its training data.

Here's a sketch model for trustworthy AI web-search assistance:

- it can see its training data, as if it was another website

- it has been cautioned successfully to know that it could be wrongly biassed toward believing its training data too much

- when it and its user agree that it has made a mistake, it can escalate a complaint against some of the training data, so that its successor can be trained differently

In order for this to be real, the training data will have to stop being a mess of copyright violations.

I wonder ignorantly if this is a bit like what shodan means in martial arts:

https://en.wikipedia.org/wiki/Shodan_(rank)

that someone has finally learned enough to actually understand what they were trained to do.

Tinkerisk: I saw a prediction to expect "zero-day storms". That's to say, instead of a mere CVE being discovered every few days for a vendor, imagine multiple 0days every day across multiple vendors.

- Hackers attack with the help of AI.

- Admins defend with the help of AI.

- Admins must arm themselves against as many attacks as possible (covering all of them is impossible).

- Hackers only need to find a single point of attack.

- This race goes on forever.

Therefore, one should behave as if there is always someone on the LAN (WiFi!) reading along, even if this is not actually the case, and there is no need to be paranoid.

I've wondered if we'd start to see something akin to a VPN sign-in page on every website you access, where you need to identify yourself as a legit visitor on a safe, minimal-content landing page that then provides access to the site(s) you're intending to visit. Once you've identified yourself, all your traffic is then sanitized before it can make any queries to databases.

jfw01:

Tinkerisk:

So, confirming that I've eventually caught up, either the second comment is sloppy, or it arises out of an awful lot of spying.

According to the thread’s subject, I would say … the latter. 😁

MadEngineer:

I saw a prediction to expect "zero-day storms". That's to say, instead of a mere CVE being discovered every few days for a vendor, imagine multiple 0days every day across multiple vendors.

For every attack strategy, there is a counter-strategy... hence the race.

Has anyone else found Copilot App Skills in Excel to be unreliable? This is the first time I'm really giving Copilot App Skills a try. My M365 account is licensed for Copilot for 365.

I'm opening a 250 line supplier billing CSV file and had Copilot for 365 help me generate a prompt to transform the file into something that I can upload to our quoting software so we have up to date pricing for our Sales Admin to use in quotes. Across a dozen tests, Copilot actually only completed the request half the time. The other half it only did part of the job and then errored and stopped.

I tried the same prompt with ChatGPT Pro, uploading the source file at the same time. Got a good result in less than half the Copilot processing time with no issues.

“Don't believe anything you read on the net. Except this. Well, including this, I suppose.” Douglas Adams

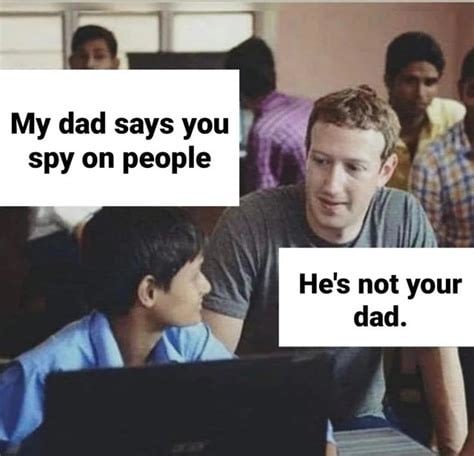

So OpenAI ChatGPT is adding advertising to your experience, but don’t worry, they promise their answers won’t steer you towards higher revenue for them…

https://openai.com/index/our-approach-to-advertising-and-expanding-access/

Key question - how would we know/how could you check?

Jon

Website: herri.es Linkedin: jonherries Products: Gauge - Bluetooth TPMS for iOS OHCE - Testing your AI

Dynamic:

Has anyone else found Copilot App Skills in Excel to be unreliable? This is the first time I'm really giving Copilot App Skills a try. My M365 account is licensed for Copilot for 365.

I'm opening a 250 line supplier billing CSV file and had Copilot for 365 help me generate a prompt to transform the file into something that I can upload to our quoting software so we have up to date pricing for our Sales Admin to use in quotes. Across a dozen tests, Copilot actually only completed the request half the time. The other half it only did part of the job and then errored and stopped.

I tried the same prompt with ChatGPT Pro, uploading the source file at the same time. Got a good result in less than half the Copilot processing time with no issues.

No real surprise here; it is like asking a BA-English honors student to do high school math. The answers are often not going to be pretty or add up (the hint is in the name: Large Language Model).

They can be quite good at suggesting Excel formulae, but regular basic math isn’t a statistics exercise (unless you are a Bayesian ;)

jonherries: Key question - how would we know/how could you check?

jonherries:

Dynamic:

Has anyone else found Copilot App Skills in Excel to be unreliable? This is the first time I'm really giving Copilot App Skills a try. My M365 account is licensed for Copilot for 365.

I'm opening a 250 line supplier billing CSV file and had Copilot for 365 help me generate a prompt to transform the file into something that I can upload to our quoting software so we have up to date pricing for our Sales Admin to use in quotes. Across a dozen tests, Copilot actually only completed the request half the time. The other half it only did part of the job and then errored and stopped.

I tried the same prompt with ChatGPT Pro, uploading the source file at the same time. Got a good result in less than half the Copilot processing time with no issues.

No real surprise here; it is like asking a BA-English honors student to do high school math. The answers are often not going to be pretty or add up (the hint is in the name: Large Language Model).

They can be quite good at suggesting Excel formulae, but regular basic math isn’t a statistics exercise (unless you are a Bayesian ;)

In my case there was no math involved, though I appreciate that computer processing is math-ish in the background.

My requirements were:

- do a number of find-and-replace operations

- if column Y contains MONTHLY, append '-MONTHLY' to the text in column D

- remove duplicate entries in column C, keeping only the row with the highest numeric value in column F

- delete columns H through X

The original file had around 250 lines and 30 columns. The resulting file would normally have around 25 lines and 13 columns. No formatting or calculations, as we'll be saving this price list as a CSV to import into the quoting software. Boring, repeatable stuff that I thought Excel Copilot App Skills would do predictably and repeatably.

Again, my biggest surprise was that it simply failed to finish the job (erroring out) around 50% of the time. That was a dozen tests across a 36-hour period.
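For comparison, the steps described above are deterministic, so they could also be done by a plain script rather than a model. The following is only a rough sketch using Python's standard `csv` module, not what Copilot or ChatGPT actually ran. It assumes the first row is a header, that columns are addressed by their Excel letters, and that ties in column F keep the first row seen. The function names (`col`, `to_number`, `transform`, `transform_csv`) and the `replacements` mapping are made up for illustration, since the actual find-and-replace pairs weren't listed.

```python
import csv
import io


def col(letter):
    """Convert an Excel column letter (A, ..., Z, AA, ...) to a 0-based index."""
    index = 0
    for ch in letter.upper():
        index = index * 26 + (ord(ch) - ord("A") + 1)
    return index - 1


def to_number(text):
    """Parse a price-like cell; unparseable cells rank below every real number."""
    try:
        return float(text.replace(",", "").replace("$", "").strip())
    except ValueError:
        return float("-inf")


def transform(rows, replacements):
    """Apply the four steps to a list of rows (first row assumed to be a header)."""
    header, *body = rows
    width = len(header)
    best = {}  # column C value -> row with the highest column F value so far
    for row in body:
        # Pad short rows so every column letter can be indexed safely.
        row = list(row) + [""] * (width - len(row))
        # Step 1: find-and-replace (hypothetical pairs supplied by the caller).
        for old, new in replacements.items():
            row = [cell.replace(old, new) for cell in row]
        # Step 2: if column Y contains MONTHLY, append '-MONTHLY' to column D.
        if "MONTHLY" in row[col("Y")]:
            row[col("D")] += "-MONTHLY"
        # Step 3: de-duplicate on column C, keeping the highest value in column F.
        key = row[col("C")]
        if key not in best or to_number(row[col("F")]) > to_number(best[key][col("F")]):
            best[key] = row
    # Step 4: delete columns H through X.
    keep = [i for i in range(width) if not col("H") <= i <= col("X")]
    return [[r[i] for i in keep] for r in [header, *best.values()]]


def transform_csv(text, replacements):
    """Same as transform(), but from CSV text to CSV text."""
    rows = list(csv.reader(io.StringIO(text)))
    out = io.StringIO()
    csv.writer(out, lineterminator="\n").writerows(transform(rows, replacements))
    return out.getvalue()
```

With a 30-column input this leaves 13 columns (A to G plus Y onwards), which matches the shape described above. The point is only that the same input gives the same output every time.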

“Don't believe anything you read on the net. Except this. Well, including this, I suppose.” Douglas Adams

Yep, interesting, but still not surprising. You should probably be running a custom instance for that in AWS or Azure: license one of the models, and then you can set the system instructions and model parameters and add a vector store (which you can’t with retail services). Your accuracy should then be pretty high (like 95%+).

On pay-as-you-go it will be 5-10c per go (but probably less, depending on how well token consumption is optimised).

I think this is what most people have missed: Copilot is just a “safe ChatGPT”, not a production service. The way I think of it is that using Copilot to solve a problem works where there is no SOP or agreed standard for quality. As soon as there is one, everyone’s different ways of doing things rapidly turn into slop.

This is the same reason we enforce things like input masks on fields, so that, for example, dates are entered the same way every time and can then be stored as dates in the database. I’m sure everyone has seen the “dd.mm.yyyy” or “ddd mmm” etc. approaches people use in Excel.
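As a rough illustration of the input mask idea, here is a minimal Python sketch. It assumes the agreed format is dd.mm.yyyy, which is picked purely for the example. `DATE_MASK` and `parse_masked_date` are hypothetical names, not part of any product mentioned here.

```python
import re
from datetime import date, datetime

# Hypothetical mask: exactly two digits, dot, two digits, dot, four digits.
DATE_MASK = re.compile(r"\d{2}\.\d{2}\.\d{4}")


def parse_masked_date(text):
    """Accept only dd.mm.yyyy and return a real date; reject everything else."""
    if not DATE_MASK.fullmatch(text):
        raise ValueError(f"expected dd.mm.yyyy, got {text!r}")
    # strptime also rejects impossible dates such as 31.02.2025.
    return datetime.strptime(text, "%d.%m.%Y").date()
```

Anything that doesn't match the mask is refused at entry, so free-form variants never reach the database.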

Jon
