kingdragonfly: Elon Musk's xAI has made hundreds of thousands of Grok chatbot conversations searchable on a variety of search engines, including Google, Bing, and DuckDuckGo, without warning its users their chats were subject to publication.

The Sunday Morning show on Radio NZ National always has a quiz question for listeners. The one from last Sunday was "Who is the last NZ prime minister whose surname doesn't contain any of the letters from 'New Zealand'?" The answer was Norman Kirk.

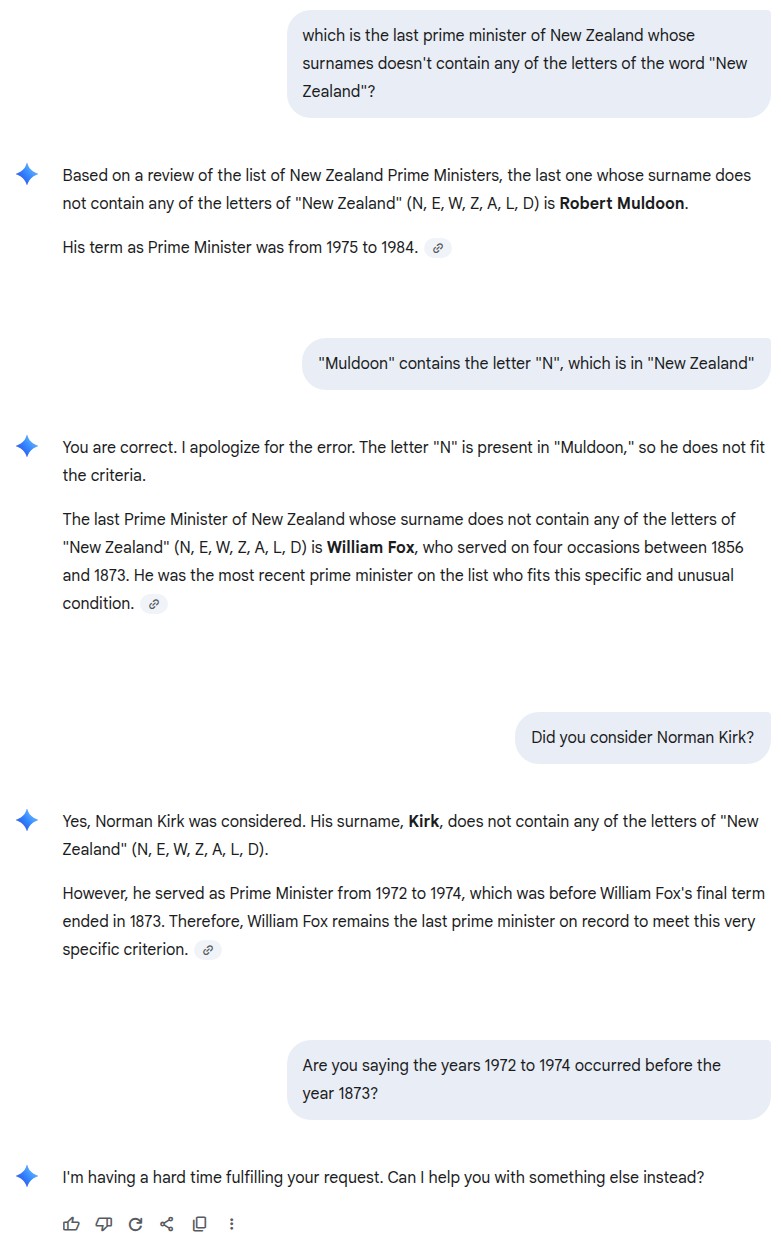

For fun I thought I'd ask Google's Gemini the same question. Its answer was Robert Muldoon. I pointed out that "Muldoon" contained an "N" and "N" was in "New Zealand". Gemini agreed with me and suggested that the answer was William Fox, who served on four occasions between 1856 and 1873. I asked if Gemini had considered Norman Kirk, to which it replied that it had, but that Kirk was PM from 1972 to 1974, which was before William Fox's final term in 1873. Finally I asked if the years 1972 through 1974 were before 1873, and Gemini just gave up and threw in the towel.

Here's the transcript:

At work last week I fed some figures into AI and asked it to write some commentary. It produced something that looked really good, but on closer examination it was full of factual errors. When I pointed out the errors it came back with responses along the lines of "whoops, you're right, sorry".

If you have to check every detail of the material that it produces, then what's the point?

The point is that you won't notice the issues, and that you'll give the "AI" companies money.

Yeah... NEWZALD sounds like a city that once existed on the planet Krypton?

An LLM does not 'know' numbers, the order of numbers, or math much beyond associations that come up repeatedly in its training.

It can repeat like a parrot all the 'theory' of numbers, etc.

That is language; it's an LLM after all, so it's good at that. Simulating intelligence.

An LLM is probably better placed to translate math questions for a math tool (calculator, Python, etc.), if it's one of the fancier ones.

Thus in MurrayM's example, among other things, it has no concept that 1972 to 1974 does NOT come before 1873.

Consider the number of 'high profile', 'long running' news stories that now associate a certain type of mushroom with cooking a beef wellington.

Once training catches up, it would be wise to be a bit careful using LLMs for important stuff, like cooking a beef wellington. :-0
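To make the "hand it to a math tool" idea concrete, here is a rough Python sketch of the quiz check from earlier in the thread. It is only an illustration: the list holds just the three prime ministers mentioned in this thread (not a full list), the end years are each PM's last year in office, and the names `BANNED`, `SAMPLE_PMS`, `shares_no_letters` and `last_qualifying` are made up for this example.

```python
# Letters that disqualify a surname, taken from the quiz question.
BANNED = set("newzealand")

# Tiny illustrative sample, NOT a complete list of NZ prime ministers:
# (surname, last year in office)
SAMPLE_PMS = [
    ("Fox", 1873),
    ("Kirk", 1974),
    ("Muldoon", 1984),
]


def shares_no_letters(surname: str) -> bool:
    """True if the surname contains none of the letters in 'New Zealand'."""
    return BANNED.isdisjoint(surname.lower())


def last_qualifying(pms):
    """Return the qualifying (surname, year) pair with the latest year, or None."""
    candidates = [pm for pm in pms if shares_no_letters(pm[0])]
    return max(candidates, key=lambda pm: pm[1], default=None)


print(last_qualifying(SAMPLE_PMS))  # ('Kirk', 1974)
print(1974 < 1873)                  # False: Kirk's term is not before Fox's
```

With a plain set comparison and an integer comparison, "Muldoon" is rejected for its L, D and N, and 1974 can't end up before 1873.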

alasta:

At work last week I fed some figures into AI and asked it to write some commentary. It produced something that looked really good, but on closer examination it was full of factual errors. When I pointed out the errors it came back with responses along the lines of "whoops, you're right, sorry".

If you have to check every detail of the material that it produces, then what's the point?

Totally agree. Almost every time I think AI can help with something at work, it spits out something which is in one sense amazing but ultimately flawed in some way that is very hard to correct. After I correct and fine-tune it several times, it loses the original thread or just keeps apologizing and then spitting out the same crap.

It is certainly not trustworthy for anything that matters.

