Not sure where to put this in the forums but FYI, seems Cloudflare DNS is down.

Doesn’t help when they stop announcing 1.1.1.0/24 in the global routing table.

It’s coming back now.

Michael Murphy | https://murfy.nz

Opinions are my own and not the views of my employer.

I was about to say it works for me.

I'd just rebooted the router and was using Geekzone to see if the interwebs was back up, and happened to see this post straight away which is ideal!

Thought it was my router acting up initially.

The BGP routing changed: the prefix started being announced by AS4755.

EDIT: Sorry, found the correct information, AS4755 Tata, not the one I posted originally.

michaelmurfy:

Doesn’t help when they stop announcing 1.1.1.0/24 in the global routing table.

It’s coming back now.

Fixed pretty quickly in that case. I always imagined BGP routes took time to converge and take effect.

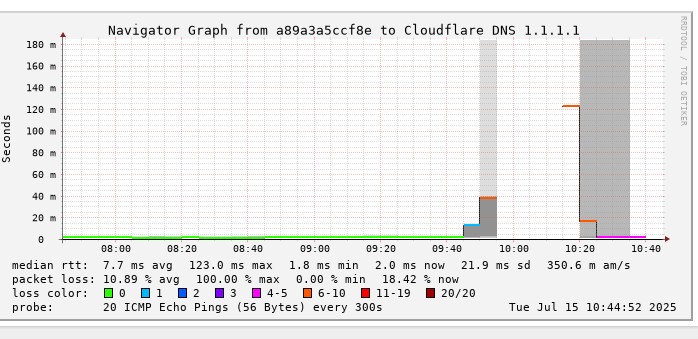

It took roughly 50 mins from when it first saw issues until it was back.

I got the alert from my Uptime Kuma and thought, damn, my connection is down in the middle of a customer call. Luckily I was using multiple upstream DNS providers from AG too :D
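For anyone wondering why multiple upstreams helped: a forwarder that tries its upstreams in turn keeps resolving when one provider is unreachable. Below is a minimal Python sketch of that idea with the actual DNS query stubbed out. The names `resolve_with_fallback` and `fake_lookup`, the second upstream `9.9.9.9`, and the returned address are all illustrative assumptions, not how AG does it internally.

```python
from typing import Callable, Iterable


class UpstreamDown(Exception):
    """Raised by a lookup function when its upstream does not answer."""


def resolve_with_fallback(
    name: str,
    upstreams: Iterable[str],
    lookup: Callable[[str, str], str],
) -> tuple[str, str]:
    """Try each upstream in order; return (upstream, answer) from the first that works."""
    errors = []
    for upstream in upstreams:
        try:
            return upstream, lookup(upstream, name)
        except UpstreamDown as exc:
            errors.append(f"{upstream}: {exc}")
    raise UpstreamDown("all upstreams failed: " + "; ".join(errors))


def fake_lookup(upstream: str, name: str) -> str:
    # Hypothetical stand-in for a real DNS query: pretend 1.1.1.1 is unreachable.
    if upstream == "1.1.1.1":
        raise UpstreamDown("timed out")
    return "192.0.2.10"  # documentation address, not a real answer


used, answer = resolve_with_fallback("example.com", ["1.1.1.1", "9.9.9.9"], fake_lookup)
print(used, answer)
```

In a real setup `lookup` would send an actual query with a short timeout, so a dead upstream costs a second or two rather than breaking resolution.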

Their own BGP issues monitoring picked it up: https://radar.cloudflare.com/routing/anomalies/hijack-107469 lol

Good old Geekzone squad able to confirm outages 👍.

Seems to be back up running for the past 10 minutes.

Too bad I couldn't log in to Geekzone; I kept getting Cloudflare reCaptcha errors, for obvious reasons.

SneakerPimps:

Good old Geekzone squad able to confirm outages 👍.

Seems to be back up running for the past 10 minutes.

FWIW at the time of this post I am seeing 1.1.1.0/24 at the following entry points to our network:

NZIX RS

EdgeIX RS

NSWIX RS

Cloudflare bilaterals

Hurricane Electric

Cogent

All of the above have an origin ASN of 13335.

So based on our local view it looks like it should be back.
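The check described above boils down to looking at the last ASN in each AS path, since that is the origin. A rough Python sketch follows; the AS paths are made up for illustration (they are not real looking-glass output), and `64500` is a documentation ASN standing in for some arbitrary transit.

```python
EXPECTED_ORIGIN = 13335  # Cloudflare, as noted above


def origin_asn(as_path: list[int]) -> int:
    """The origin AS is the last (right-most) ASN in the AS path."""
    return as_path[-1]


def unexpected_origins(
    routes: dict[str, list[int]], expected: int = EXPECTED_ORIGIN
) -> dict[str, int]:
    """Return the entry points whose route for the prefix has the wrong origin."""
    return {
        source: origin_asn(path)
        for source, path in routes.items()
        if origin_asn(path) != expected
    }


# Hypothetical AS paths for 1.1.1.0/24 as seen at a few entry points.
routes_now = {
    "NZIX RS": [13335],
    "Hurricane Electric": [6939, 13335],
    "Cogent": [174, 13335],
}
# Same view with one extra, made-up entry carrying the AS4755 announcement.
routes_with_leak = {**routes_now, "example transit": [64500, 4755]}

print(unexpected_origins(routes_now))
print(unexpected_origins(routes_with_leak))
```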

WFH Linux Systems and Networks Engineer in the Internet industry | Specialising in Mikrotik | APNIC member | Open to job offers | ZL2NET

I am surprised that, after so long, BGP still allows providers to advertise ranges that don't belong to them...

Just to clear up any confusion circulating around the BGP "hijack" of 1.1.1.0/24...

Tata Communications/AS4755 was always (incorrectly) announcing 1.1.1.0/24, however due to RPKI on 1.1.1.0/24, this route was marked invalid by anyone validating RPKI (and their downstream ASNs). You can validate this here: https://rpki.cloudflare.com/?view=validator&validateRoute=4755_1.1.1.0%2F24
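To make the "marked invalid" part concrete, here is a small Python sketch of route origin validation along the lines of RFC 6811. The single ROA in the example (1.1.1.0/24, max length 24, AS13335) is an assumption for illustration; check the validator link above for the real published data.

```python
from dataclasses import dataclass
from ipaddress import ip_network


@dataclass(frozen=True)
class Roa:
    prefix: str
    max_length: int
    asn: int


def validate(prefix: str, origin: int, roas: list[Roa]) -> str:
    """Classify an announcement as 'valid', 'invalid' or 'not-found'."""
    route = ip_network(prefix)
    covered = False
    for roa in roas:
        roa_net = ip_network(roa.prefix)
        # Skip ROAs for the other address family or that do not cover the route.
        if route.version != roa_net.version or not route.subnet_of(roa_net):
            continue
        covered = True
        if roa.asn == origin and route.prefixlen <= roa.max_length:
            return "valid"
    # Covered by at least one ROA but matching none of them means invalid.
    return "invalid" if covered else "not-found"


roas = [Roa("1.1.1.0/24", 24, 13335)]  # assumed ROA, for illustration only

print(validate("1.1.1.0/24", 13335, roas))
print(validate("1.1.1.0/24", 4755, roas))
print(validate("192.0.2.0/24", 4755, roas))
```

A validating network typically drops anything that comes back `invalid`, which is why the AS4755 announcement normally went nowhere.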

The real issue was Cloudflare did indeed withdraw 1.1.1.0/24 from the global route table accidentally. This resulted in the route from Tata propagating more than it usually would, as their route was then the only route available in the global table.

Even looking at the "hijack" event, you can see that the percentage of peers observing the route is only 2%. These are peers who clearly aren't performing any RPKI validation.
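A toy model of the before and after, in Python. It is heavily simplified: real best-path selection looks at local preference and more before AS-path length, the origin check is a stand-in for a full RPKI lookup, and the AS paths (using documentation ASNs 64500-64502) are invented.

```python
CLOUDFLARE, TATA = 13335, 4755
AUTHORISED_ORIGIN = CLOUDFLARE  # stand-in for a full RPKI check


def best_route(candidates, validates_rpki):
    """Pick the shortest AS path among acceptable routes, or None if there are none."""
    usable = [
        path
        for path in candidates
        if not validates_rpki or path[-1] == AUTHORISED_ORIGIN
    ]
    return min(usable, key=len, default=None)


# Hypothetical routes for 1.1.1.0/24 visible to a peer.
before_withdrawal = [[64500, CLOUDFLARE], [64501, 64502, TATA]]
after_withdrawal = [[64501, 64502, TATA]]

for label, candidates in (("before", before_withdrawal), ("after", after_withdrawal)):
    for validates in (True, False):
        print(label, "validating" if validates else "non-validating",
              best_route(candidates, validates))
```

In this model the validating peer ends up with no route at all after the withdrawal, while the non-validating peer falls back to the AS4755 path, which matches the small share of peers that saw the "hijack".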

Technically, yes, it was a BGP route hijack; however, it was a symptom of the issue, not the cause. In any case, I think Tata have now been given a sufficient slap on the wrist! 😆

One interesting thought though: The fact that seemingly everyone in New Zealand was impacted by this is somewhat a good thing. This proves that the provider you're with, and/or their upstreams, are performing RPKI validation, and dropping invalid routes - a great thing!

In terms of the actual cause, Cloudflare have historically been very good at posting about their errors and the lessons learned, so we'll have to wait for this one to come to light!

My views are as unique as a unicorn riding a unicycle. They do not reflect the opinions of my employer, my cat, or the sentient coffee machine in the break room.

Incident report is out

https://blog.cloudflare.com/cloudflare-1-1-1-1-incident-on-july-14-2025/

TL;DR

The outage occurred because of a misconfiguration of legacy systems used to maintain the infrastructure that advertises Cloudflare’s IP addresses to the Internet...

... On June 6, during a release to prepare a service topology for a future DLS service, a configuration error was introduced: the prefixes associated with the 1.1.1.1 Resolver service were inadvertently included alongside the prefixes that were intended for the new DLS service. This configuration error sat dormant in the production network as the new DLS service was not yet in use, but it set the stage for the outage on July 14 ....

...A configuration change was made for the same DLS service. The change attached a test location to the non-production service; this location itself was not live, but the change triggered a refresh of network configuration globally.

Due to the earlier configuration error linking the 1.1.1.1 Resolver's IP addresses to our non-production service, those 1.1.1.1 IPs were inadvertently included when we changed how the non-production service was set up.

The 1.1.1.1 Resolver prefixes started to be withdrawn from production Cloudflare data centers globally... (July 14, 21:48 UTC)

... Once our configuration error had been exposed and Cloudflare systems had withdrawn the routes from our routing table, all of the 1.1.1.1 routes should have disappeared entirely from the global Internet routing table. However, this isn’t what happened with the prefix 1.1.1.0/24. Instead, we got reports from Cloudflare Radar that Tata Communications India (AS4755) had started advertising 1.1.1.0/24: from the perspective of the routing system, this looked exactly like a prefix hijack. This was unexpected to see while we were troubleshooting the routing problem, but to be perfectly clear: this BGP hijack was not the cause of the outage....

...We reverted to the previous configuration at 22:20 UTC. Near instantly, we began readvertising the BGP prefixes which were previously withdrawn from the routers, including 1.1.1.0/24. This restored 1.1.1.1 traffic levels to roughly 77% of what they were prior to the incident.
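Quick arithmetic on the two timestamps quoted above, as a Python sketch. It only covers the window from the start of the withdrawal to the revert (which brought traffic back to roughly 77%), not full recovery. The fixed UTC+12 offset for NZST is used because New Zealand is on standard time in July.

```python
from datetime import datetime, timedelta, timezone

NZST = timezone(timedelta(hours=12), "NZST")  # New Zealand standard time (no DST in July)

withdrawn = datetime(2025, 7, 14, 21, 48, tzinfo=timezone.utc)
reverted = datetime(2025, 7, 14, 22, 20, tzinfo=timezone.utc)

# Whole minutes between the start of the withdrawal and the configuration revert.
minutes_to_revert = (reverted - withdrawn) // timedelta(minutes=1)
print(minutes_to_revert)

# The same moments in local New Zealand time.
print(withdrawn.astimezone(NZST).strftime("%d %b %H:%M %Z"))
print(reverted.astimezone(NZST).strftime("%d %b %H:%M %Z"))
```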

Clint
